Last week’s post on my performance comparison tests stimulated quite a lot of discussion on the blog and Twitter, not least about the large disparity in index sizes (many thanks to everyone who contributed to this!). The Elasticsearch index was apparently nearly twice the size of the Solr index (ES performance was also roughly double). In the end, it seems that the most likely reason for the apparent size difference was that I caught ES in the middle of a Lucene merge operation (this was suggested by my colleague @romseygeek). Unfortunately I’d deleted the EBS volume by this point, so it was impossible to confirm.

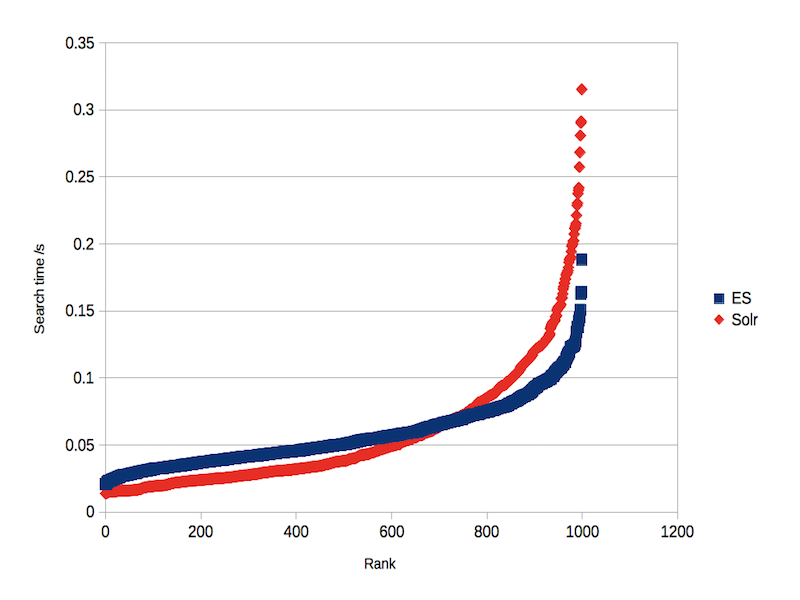

I thought it might be interesting to compare the performance of just a single shard on a single node, with 2M documents. DocValues were enabled for the non-analysed Solr field. This time the index sizes were close to identical, at 3.6GB (and the indexing process took 18m47s for ES, 18m05s for Solr). The mean search times were also the same, at 0.06s with concurrent indexing and 0.04s without. However, the distribution of search times was quite different (see figures 1 and 2). ES had a flatter distribution: without concurrent indexing, 99% of ES searches took less than 0.08s, compared with 0.15s for Solr.
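To illustrate how two sets of search times can share a mean while differing at the 99th percentile, here is a minimal sketch in Python. The sample values are invented for illustration and are not the measurements from these tests; the helper names `percentile` and `summarise` are hypothetical, and the nearest-rank percentile method is an assumption rather than the method used to produce the figures.

```python
import math
import statistics


def percentile(samples, pct):
    """Nearest-rank percentile: the smallest sample such that at least
    pct percent of all samples are less than or equal to it."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def summarise(samples):
    """Return the mean, median and 99th percentile of a list of timings."""
    return {
        "mean": statistics.mean(samples),
        "p50": percentile(samples, 50),
        "p99": percentile(samples, 99),
    }


# Invented timings in seconds (NOT the measured data): both lists have
# a mean of 0.04s, but the second has a small number of slow outliers.
flat = [0.04] * 100
long_tail = [0.03] * 95 + [0.23] * 5

if __name__ == "__main__":
    print("flat:     ", summarise(flat))
    print("long tail:", summarise(long_tail))
```

With data shaped like this, the mean hides the slow searches entirely, which is why the percentile figures are worth reporting alongside it.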

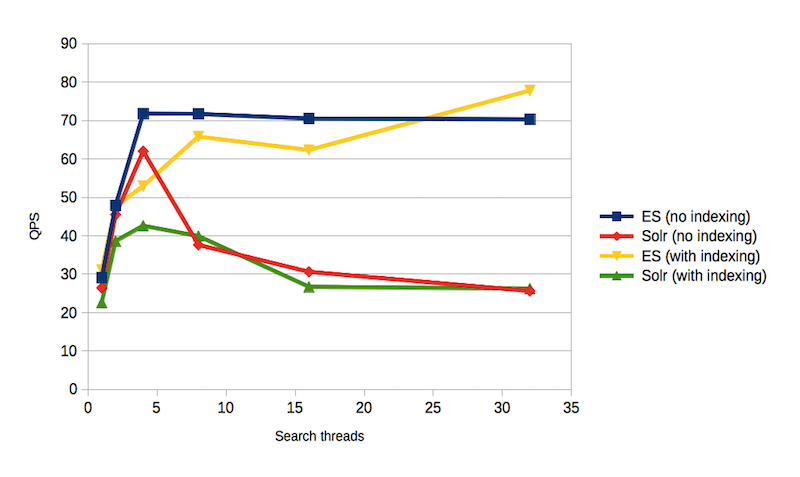

The most dramatic difference was in QPS. As in the previous study, ES supported approximately twice the QPS of Solr (see figure 3). So, although the change in index size and node topology has altered the results, the performance advantage still seems to be with ES.
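For readers who want to try a QPS measurement of their own, the following is a rough sketch of one way to do it, assuming a fixed pool of client threads firing queries as fast as the server will answer them. It is not the harness used for these tests: `measure_qps`, `run_query`, and the worker count are hypothetical names and values, and `run_query` stands in for whatever function sends a single search request to ES or Solr.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def measure_qps(run_query, queries, workers=4):
    """Run every query through a thread pool and return the number of
    completed queries per second of wall-clock time.

    run_query is a caller-supplied function (hypothetical here) that
    executes one search request and returns when the response arrives.
    """
    start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # list() forces all queries to complete before the clock stops.
        results = list(pool.map(run_query, queries))
    elapsed = time.perf_counter() - start
    return len(results) / elapsed if elapsed > 0 else float("inf")


if __name__ == "__main__":
    # Stand-in for a real search call: sleeps for 10ms per "query".
    def fake_query(_query):
        time.sleep(0.01)

    print(f"{measure_qps(fake_query, range(200), workers=8):.0f} QPS")
```

A measurement like this depends heavily on the number of client threads and on where the client runs, so the same settings should be used for both engines.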

I’m not sure there is much point continuing these tests, as they don’t have particularly significant implications for real-world applications. They were quite fun to do, though, and it’s clear that ES has greatly improved its performance relative to Solr compared with our 2014 study.

Your 2014 study was flawed. There are multiple pull requests open from last year that negated any performance difference Solr had over Elasticsearch.

This year you used the same flawed code without addressing any of the issues. Elasticsearch is smarter and was able to handle your bad queries better. Even your Solr queries were not optimal last year and can be improved even more this year using the new “filter()” operator available in 5.4.

I’m guessing that if you were to address the issues raised in the PRs and update Solr to use the new filter operator for each “OR” filter, your performance would be very similar if not identical.

It’s true when you say these tests “don’t have particularly significant implications for real world applications”, but the reality is you are out here making claims that are most likely false due to poor test setup.

Thanks for your comments, Matt. If we have time, we’ll try to incorporate your pull requests and see if they make a difference.